2026

Cross-Modal Dynamic Hypergraph Computation via Functional-Structural Brain Network for Brain Disease Diagnosis

Jingxi Feng, Heming Xu, Rundong Xue, Junhao Cai, Xudong Chen, Dong Zhang, Shaoyi Du

International Joint Conference on Artificial Intelligence (IJCAI) 2026

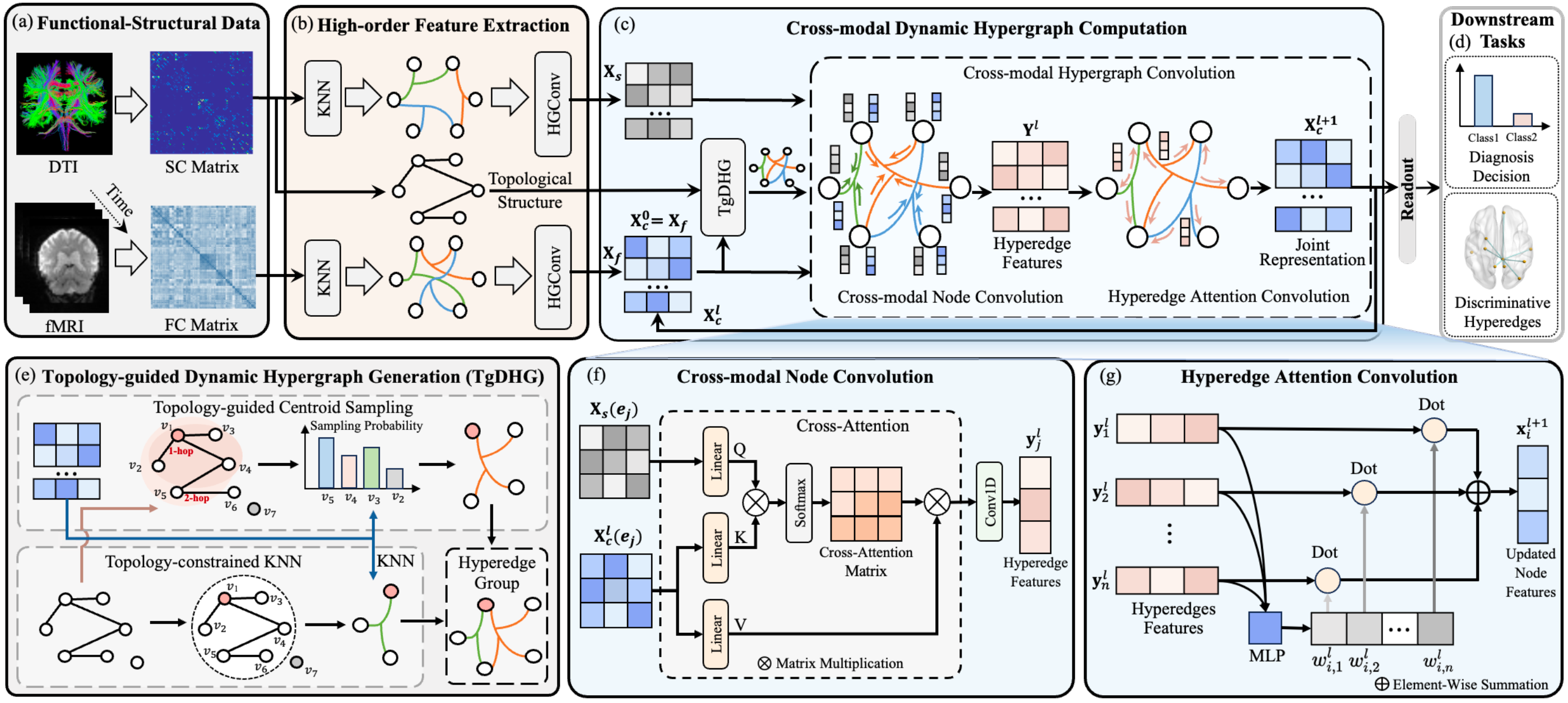

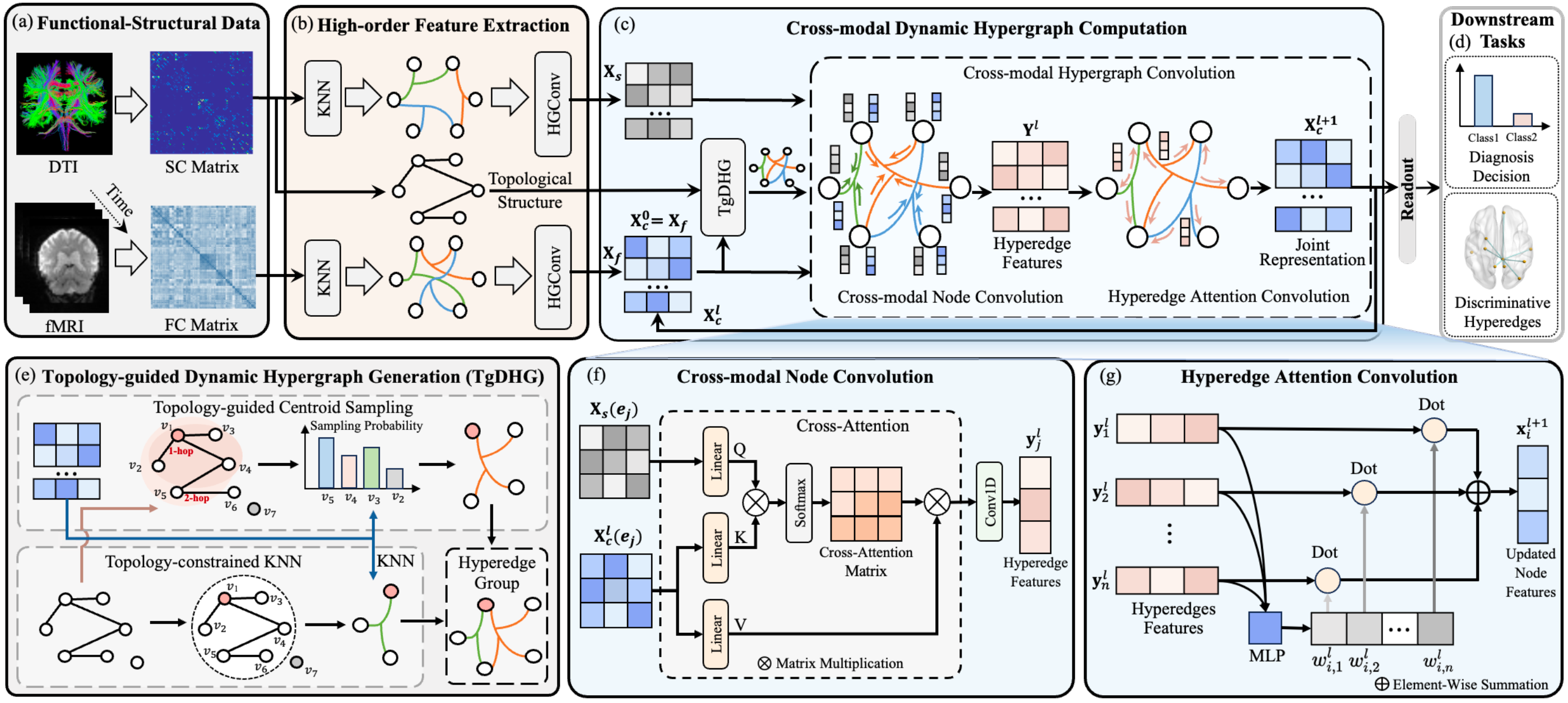

Cross-modal brain networks offer complementary functional and structural views for understanding inter-regional connectivity and diagnosing brain diseases, yet existing methods often underuse their joint topology and high-order associations. This paper proposes Cross-Modal Dynamic Hypergraph Computation (CDHGC), a framework that learns topology-guided high-order correlations in functional-structural brain networks. CDHGC first generates and dynamically optimizes topology-aware hypergraphs to reveal latent cross-modal relationships, then performs cross-modal hypergraph convolution with attention-based message passing to produce joint representations. Experiments on ADNI and ABIDE show that CDHGC surpasses state-of-the-art methods, while interpretability analysis identifies multimodal biomarkers related to brain disorders.

Cross-Modal Dynamic Hypergraph Computation via Functional-Structural Brain Network for Brain Disease Diagnosis

Jingxi Feng, Heming Xu, Rundong Xue, Junhao Cai, Xudong Chen, Dong Zhang, Shaoyi Du

International Joint Conference on Artificial Intelligence (IJCAI) 2026

Cross-modal brain networks offer complementary functional and structural views for understanding inter-regional connectivity and diagnosing brain diseases, yet existing methods often underuse their joint topology and high-order associations. This paper proposes Cross-Modal Dynamic Hypergraph Computation (CDHGC), a framework that learns topology-guided high-order correlations in functional-structural brain networks. CDHGC first generates and dynamically optimizes topology-aware hypergraphs to reveal latent cross-modal relationships, then performs cross-modal hypergraph convolution with attention-based message passing to produce joint representations. Experiments on ADNI and ABIDE show that CDHGC surpasses state-of-the-art methods, while interpretability analysis identifies multimodal biomarkers related to brain disorders.

Beyond Viewpoint Generalization: What Multi-View Demonstrations Offer and How to Synthesize Them for Robot Manipulation?

Boyang Cai, Qiwei Liang, Jiawei Li, Shihang Weng, Zhaoxin Zhang, Tao Lin, Xiangyu Chen, Wenjie Zhang, Jiaqi Mao, Weisheng Xu, Bin Yang, Jiaming Liang, Junhao Cai, Renjing Xu

arXiv preprint 2026

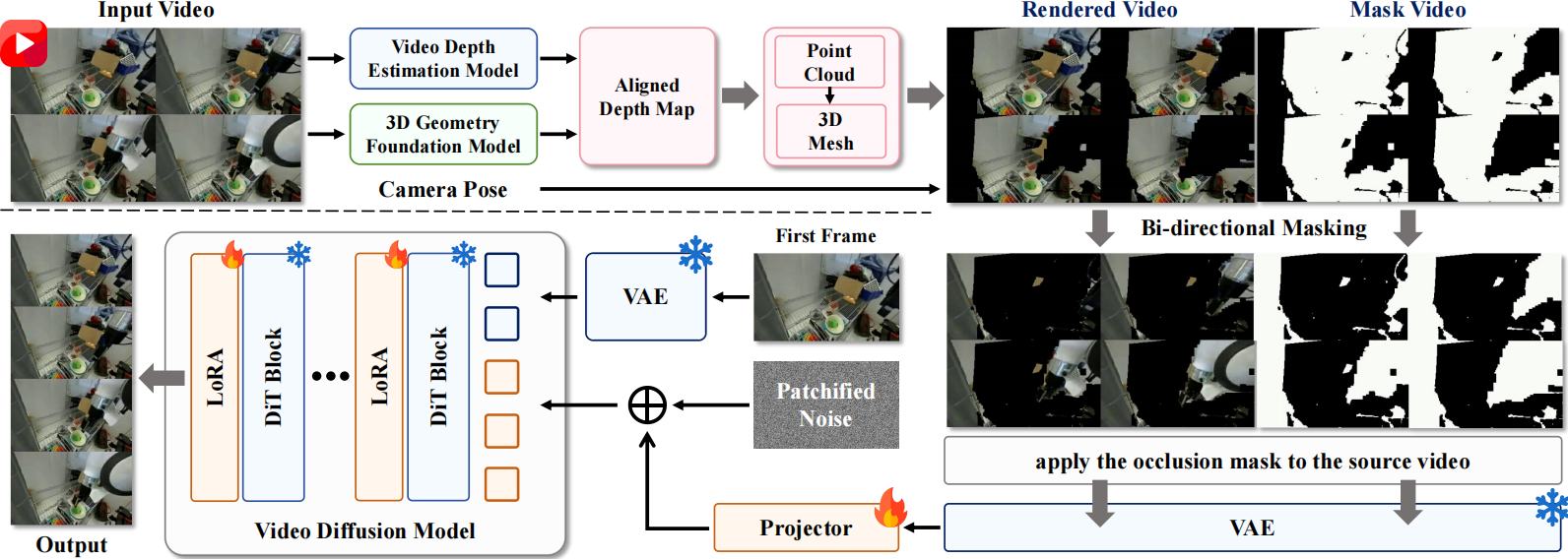

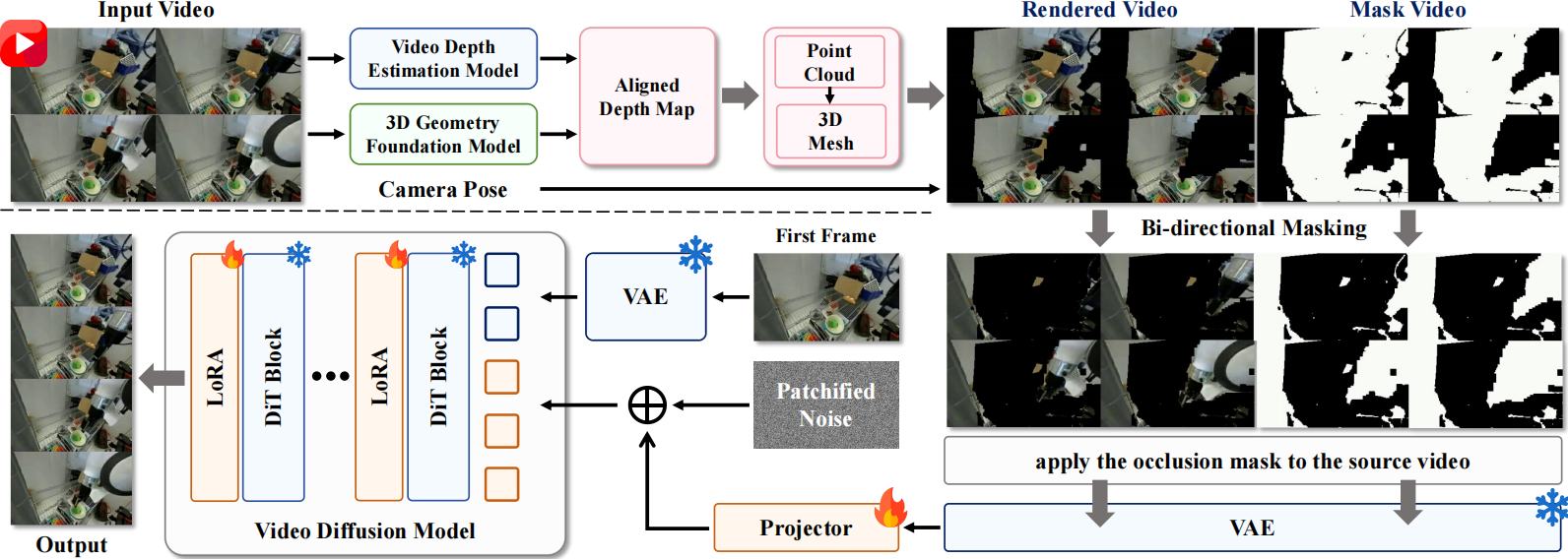

Does multi-view demonstration truly improve robot manipulation, or merely enhance cross-view robustness? This paper presents a systematic study quantifying the performance gains, scaling behavior, and underlying mechanisms of multi-view data for robot manipulation. Controlled experiments show that, under both fixed and randomized backgrounds, multi-view demonstrations consistently improve single-view policy success and generalization. Motivated by the importance of multi-view data and its scarcity in large-scale robotic datasets, the paper further proposes RoboNVS, a geometry-aware self-supervised framework that synthesizes novel-view videos from monocular inputs, and shows that the generated data consistently improves downstream policies in both simulation and real-world environments.

Beyond Viewpoint Generalization: What Multi-View Demonstrations Offer and How to Synthesize Them for Robot Manipulation?

Boyang Cai, Qiwei Liang, Jiawei Li, Shihang Weng, Zhaoxin Zhang, Tao Lin, Xiangyu Chen, Wenjie Zhang, Jiaqi Mao, Weisheng Xu, Bin Yang, Jiaming Liang, Junhao Cai, Renjing Xu

arXiv preprint 2026

Does multi-view demonstration truly improve robot manipulation, or merely enhance cross-view robustness? This paper presents a systematic study quantifying the performance gains, scaling behavior, and underlying mechanisms of multi-view data for robot manipulation. Controlled experiments show that, under both fixed and randomized backgrounds, multi-view demonstrations consistently improve single-view policy success and generalization. Motivated by the importance of multi-view data and its scarcity in large-scale robotic datasets, the paper further proposes RoboNVS, a geometry-aware self-supervised framework that synthesizes novel-view videos from monocular inputs, and shows that the generated data consistently improves downstream policies in both simulation and real-world environments.

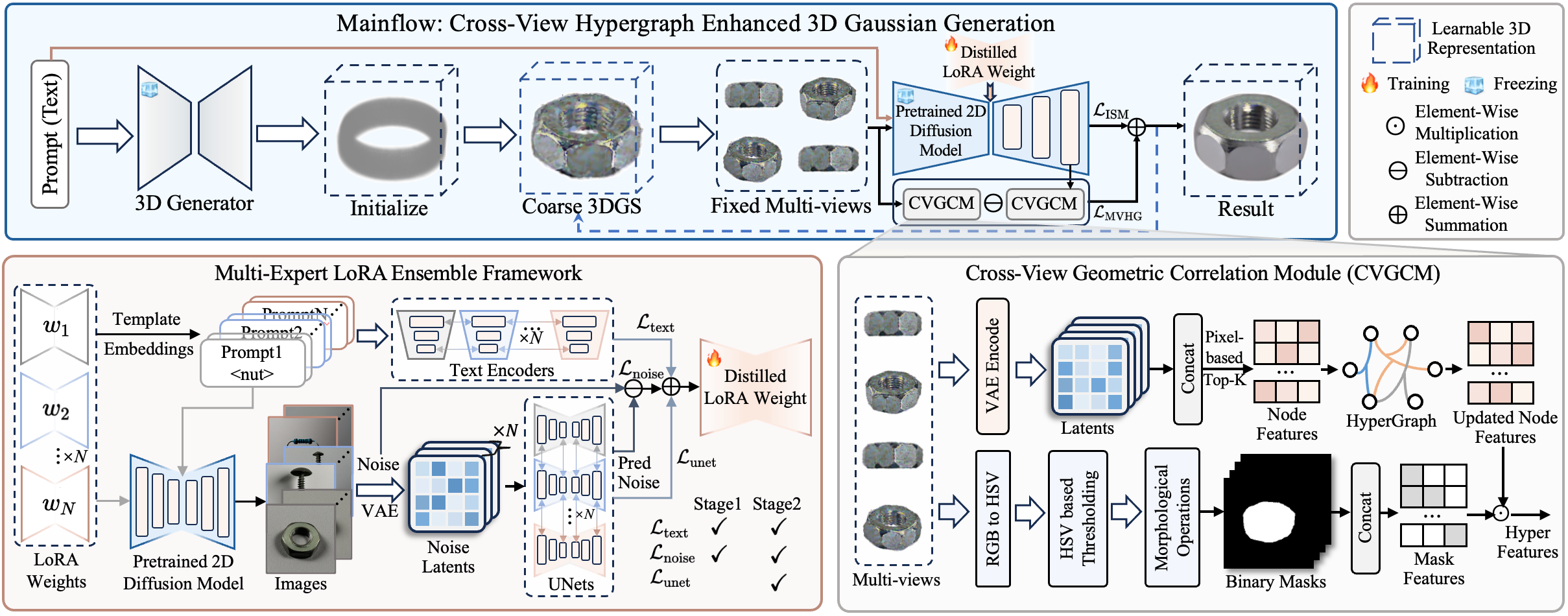

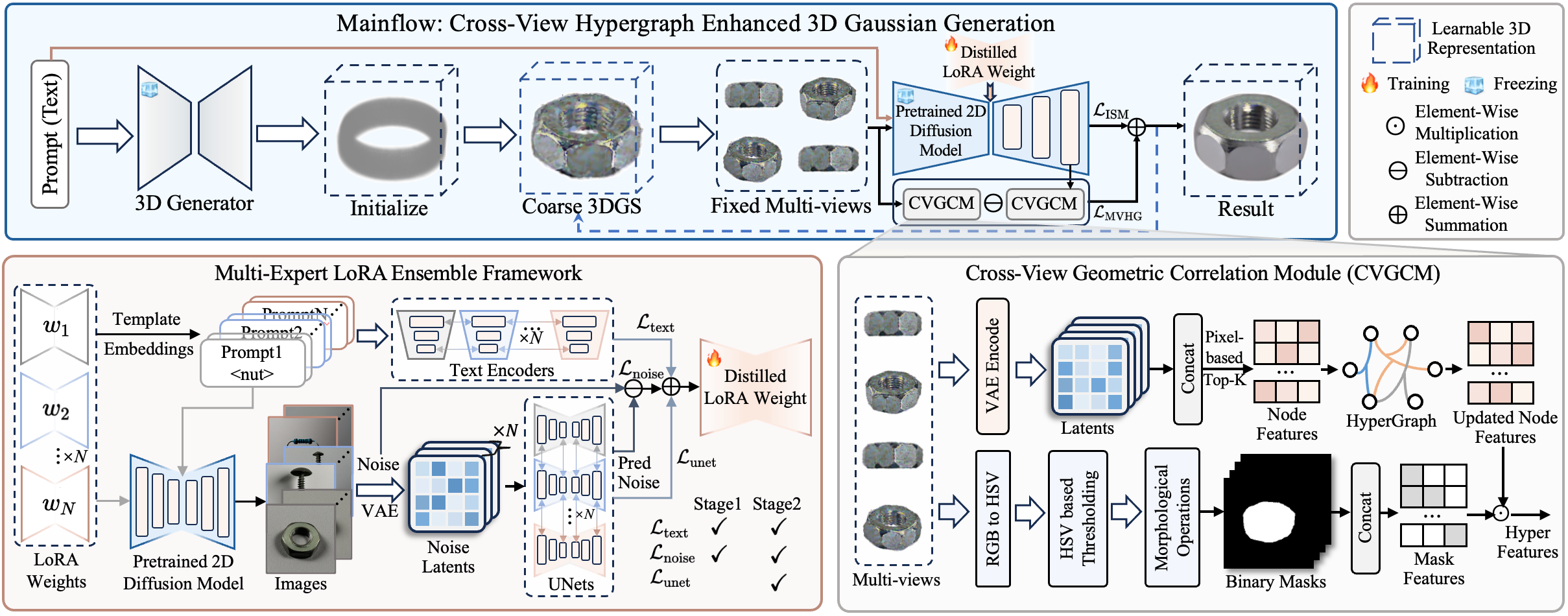

ForgeDreamer: Industrial Text-to-3D Generation with Multi-Expert LoRA and Cross-View Hypergraph

Junhao Cai*, Deyu Zeng*, Junhao Pang, Lini Li, Zongze Wu, Xiaopin Zhong (* equal contribution)

IEEE Conference on Computer Vision and Pattern Recognition (CVPR Findings) 2026

Current text-to-3D generation methods excel in natural scenes but struggle with industrial applications due to two critical limitations: domain adaptation challenges, where conventional LoRA fusion causes knowledge interference across categories, and geometric reasoning deficiencies, where pairwise consistency constraints fail to capture higher-order structural dependencies essential for precision manufacturing. We propose a novel framework named ForgeDreamer that addresses both challenges through two key innovations. First, we introduce a Multi-Expert LoRA Ensemble mechanism that consolidates multiple category-specific LoRA models into a unified representation, achieving superior cross-category generalization while eliminating knowledge interference. Second, building on enhanced semantic understanding, we develop a Cross-View Hypergraph Geometric Enhancement approach that captures structural dependencies spanning multiple viewpoints simultaneously. These components work synergistically: improved semantic understanding enables more effective geometric reasoning, while hypergraph modeling ensures manufacturing-level consistency. Extensive experiments on a custom industrial dataset demonstrate superior semantic generalization and enhanced geometric fidelity compared to state-of-the-art approaches. Our code and data are provided in the supplementary material attached in the appendix for review purposes.

ForgeDreamer: Industrial Text-to-3D Generation with Multi-Expert LoRA and Cross-View Hypergraph

Junhao Cai*, Deyu Zeng*, Junhao Pang, Lini Li, Zongze Wu, Xiaopin Zhong (* equal contribution)

IEEE Conference on Computer Vision and Pattern Recognition (CVPR Findings) 2026

Current text-to-3D generation methods excel in natural scenes but struggle with industrial applications due to two critical limitations: domain adaptation challenges, where conventional LoRA fusion causes knowledge interference across categories, and geometric reasoning deficiencies, where pairwise consistency constraints fail to capture higher-order structural dependencies essential for precision manufacturing. We propose a novel framework named ForgeDreamer that addresses both challenges through two key innovations. First, we introduce a Multi-Expert LoRA Ensemble mechanism that consolidates multiple category-specific LoRA models into a unified representation, achieving superior cross-category generalization while eliminating knowledge interference. Second, building on enhanced semantic understanding, we develop a Cross-View Hypergraph Geometric Enhancement approach that captures structural dependencies spanning multiple viewpoints simultaneously. These components work synergistically: improved semantic understanding enables more effective geometric reasoning, while hypergraph modeling ensures manufacturing-level consistency. Extensive experiments on a custom industrial dataset demonstrate superior semantic generalization and enhanced geometric fidelity compared to state-of-the-art approaches. Our code and data are provided in the supplementary material attached in the appendix for review purposes.

2025

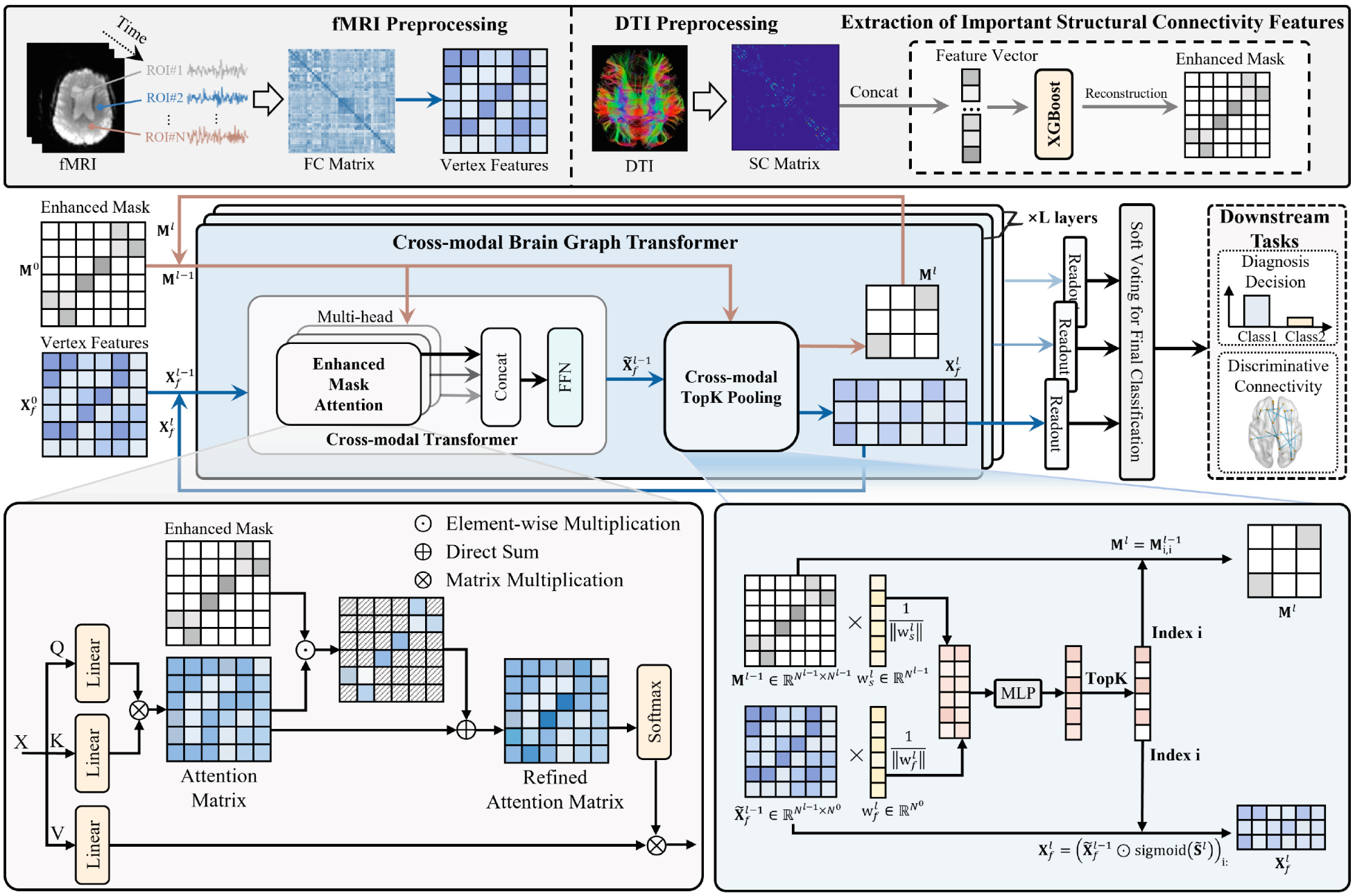

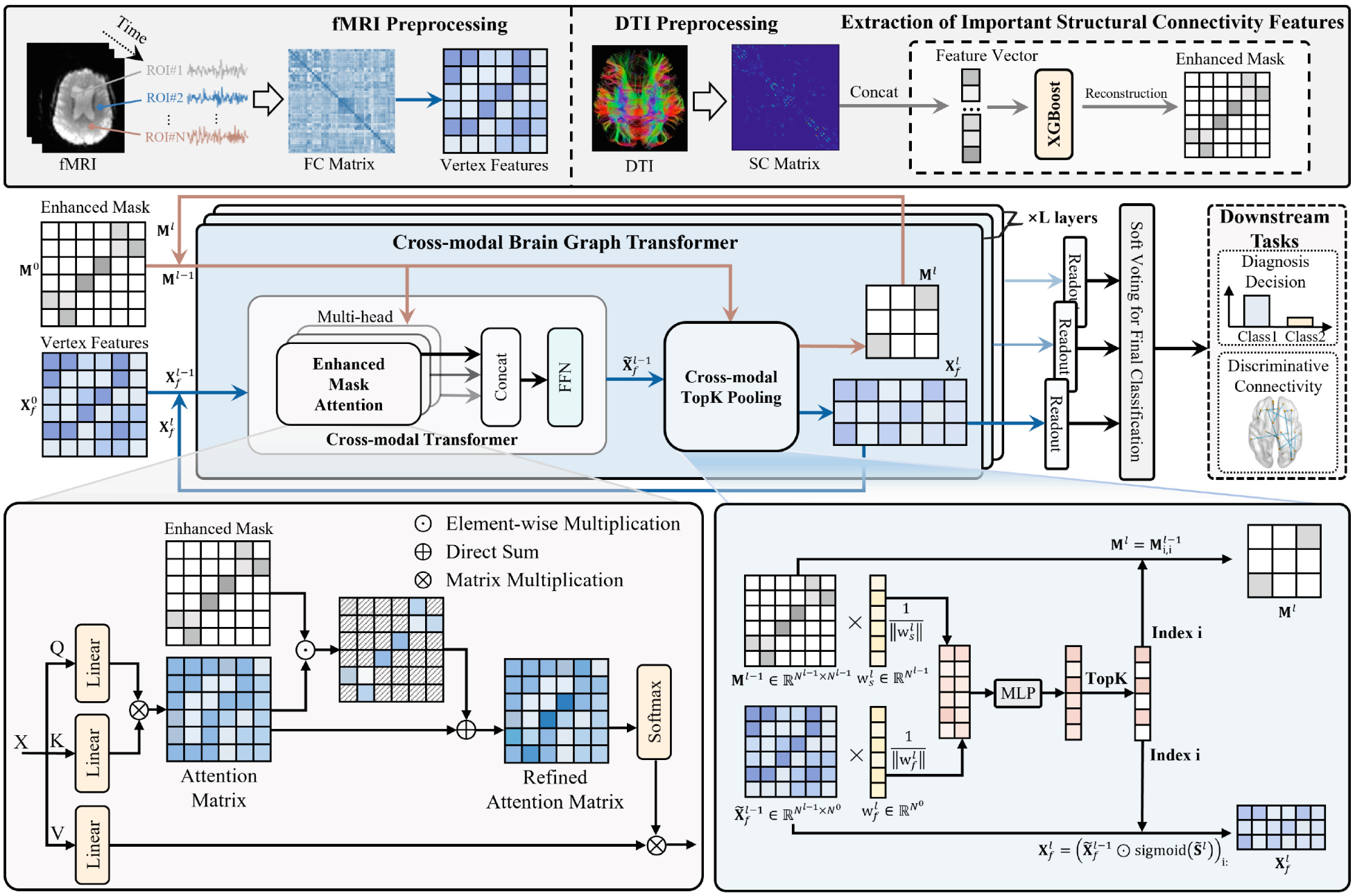

Cross-Modal Brain Graph Transformer via Function-Structure Connectivity Network for Brain Disease Diagnosis

Jingxi Feng, Heming Xu, Junhao Cai, Yujie Chang, Dong Zhang, Shaoyi Du, Juan Wang

Medical Image Computing and Computer Assisted Intervention (MICCAI) 2025

Multi-modal brain networks represent the complex connectivity between different brain regions from both functional and structural perspectives, which is of great significance for brain disease diagnosis. However, existing methods are limited to information fusion in the feature dimension, failing to fully exploit the complementary information between functional and structural connectivity networks. To address these issues, this paper proposes a cross-modal brain graph transformer (CBGT) method for brain disease diagnosis, which also provides an in-depth analysis of coupled function-structure connectivity networks. Specifically, CBGT consists of two main modules: the cross-modal Transformer module enhances the attention mechanism by utilizing structural connectivity features extracted through machine learning methods, capturing long-range dependencies in the cross-modal brain network. The cross-modal topK pooling module combines information from both functional and structural connectivity networks to select significant regions of interest (ROIs) during the reconstruction of the pooled graph, aiming to retain as much effective information as possible. Experiments conducted on the ABIDE and ADNI datasets demonstrate that the proposed method outperforms state-of-the-art approaches. Interpretation analysis reveals that the proposed method can identify multi-modal biomarkers associated with brain diseases.

Cross-Modal Brain Graph Transformer via Function-Structure Connectivity Network for Brain Disease Diagnosis

Jingxi Feng, Heming Xu, Junhao Cai, Yujie Chang, Dong Zhang, Shaoyi Du, Juan Wang

Medical Image Computing and Computer Assisted Intervention (MICCAI) 2025

Multi-modal brain networks represent the complex connectivity between different brain regions from both functional and structural perspectives, which is of great significance for brain disease diagnosis. However, existing methods are limited to information fusion in the feature dimension, failing to fully exploit the complementary information between functional and structural connectivity networks. To address these issues, this paper proposes a cross-modal brain graph transformer (CBGT) method for brain disease diagnosis, which also provides an in-depth analysis of coupled function-structure connectivity networks. Specifically, CBGT consists of two main modules: the cross-modal Transformer module enhances the attention mechanism by utilizing structural connectivity features extracted through machine learning methods, capturing long-range dependencies in the cross-modal brain network. The cross-modal topK pooling module combines information from both functional and structural connectivity networks to select significant regions of interest (ROIs) during the reconstruction of the pooled graph, aiming to retain as much effective information as possible. Experiments conducted on the ABIDE and ADNI datasets demonstrate that the proposed method outperforms state-of-the-art approaches. Interpretation analysis reveals that the proposed method can identify multi-modal biomarkers associated with brain diseases.